Continuous Testing with QuerySurge DevOps for Data

Learn how QuerySurge automates data validation and testing at every stage of your DataOps pipeline.

The modern enterprise operates within a digital paradigm where the competitive boundary is defined by the speed and accuracy of its data-driven decision-making.

As organizations transition from static business intelligence to dynamic, real-time analytics and artificial intelligence, the underlying data pipelines have become increasingly complex, fragile, and voluminous. This complexity has necessitated the rise of DataOps, a collaborative data management practice designed to improve the communication, integration, and automation of data flows between data managers and data consumers.1

Modeled after the successes of DevOps in software engineering, DataOps seeks to transform data management from a bespoke, manual "craft" into an industrialized "data factory" capable of delivering high-quality insights at unprecedented scale.3

Within this rapidly expanding market, which is projected to reach USD 10.9 billion by 2028, QuerySurge has emerged as the preeminent solution for enterprise data validation, leveraging artificial intelligence and deep CI/CD integration to ensure the integrity of the global data supply chain.1

DataOps represents a holistic mindset that transcends specific technologies, combining the methodologies of Agile development, DevOps, Lean manufacturing, and Total Quality Management (TQM) to optimize the data lifecycle.3

The term dates to 2014, when it was introduced to describe the application of software engineering best practices to the unique challenges of data analytics pipelines.3

Unlike traditional data management, which often suffered from siloed departments and long waterfall delivery cycles, DataOps emphasizes an incremental approach to innovation, continuous stakeholder collaboration, and the pervasive use of automation to eliminate bottlenecks.7

The Agile discipline within DataOps focuses on breaking down silos between data engineers, data scientists, and business stakeholders. By utilizing iterative development cycles, self-organized teams can work closely with subject matter experts to ensure that data products—such as reports, models, and dashboards—accurately reflect evolving business requirements.3 DevOps provides the technical infrastructure for this agility, contributing essential practices such as version control, continuous integration, and continuous deployment (CI/CD) to the data domain.3 In a DataOps environment, data pipelines are treated as code, allowing for automated testing, rapid deployment, and the ability to roll back changes when defects are detected.9

| Framework Aspect | DataOps Application | DevOps Origin |
|---|---|---|
| Primary Objective | Ensuring reliable, timely delivery of data for decision-making.9 | Rapid, high-quality software application delivery.9 |
| Core Practices | Data automation, observability, and pipeline orchestration.7 | CI/CD, automated unit testing, and infrastructure as code.9 |
| Key Stakeholders | Data engineers, scientists, analysts, and business owners.8 | Software developers, IT operations, and QA professionals.9 |
| Cultural Impact | Agility in data workflows and cross-functional transparency.3 | Collaboration between development and operations teams.3 |

The integration of Lean manufacturing principles into DataOps is perhaps the most transformative conceptual shift for enterprise data management. This approach views the data pipeline as a production line in a "data factory," where raw data enters at one end and refined analytic insights emerge at the other.3 Lean thinking emphasizes the maximization of productivity through the simultaneous minimization of waste.11 In the context of a data pipeline, waste (muda) can manifest in eight distinct forms, each of which contributes to operational friction and decreased time-to-value.11

| Type of Waste | Data Pipeline Manifestation | Operational Impact |
|---|---|---|
| Over-production | Generating reports or datasets that no one uses.11 | Wasted storage and compute resources.12 |
| Waiting Time | Stalled insights due to slow ETL jobs or manual approvals.11 | Delays in critical business decision-making.12 |
| Transportation | Unnecessary movement of data between disparate systems.11 | Increased latency and higher risk of data corruption.11 |
| Over-processing | Complex transformations or cleansing steps that add no value.12 | Unnecessary architectural complexity and technical debt.13 |
| Excess Inventory | Storing vast amounts of unrefined data "just in case".11 | High cloud storage costs and increased data gravity.11 |
| Unnecessary Motion | Manual SQL coding and repetitive pipeline configuration.12 | Reduced engineering productivity and higher burnout.1 |
| Defects | Poor data quality requiring manual cleaning or re-runs.11 | Loss of trust in analytics and potential financial loss.13 |
| Under-utilized Talent | Experts spending 40% of time on manual validation.15 | Strategic AI/ML initiatives are deprioritized.15 |

By applying Lean principles, DataOps teams strive for "flow," ensuring that the production and delivery of data happen at or near the rate of demand.11 This is supported by "pull" systems, in which data production is triggered by actual business needs rather than arbitrary schedules.4

Total Quality Management (TQM) informs the DataOps focus on continuous testing and monitoring. This discipline advocates the "jidoka" principle, in which the pipeline is designed to detect abnormalities automatically and alert operators, together with error-proofing (poka-yoke) to keep defects from being introduced in the first place.4 Instead of treating quality as a final inspection step, DataOps embeds automated quality gates at every stage of the pipeline: ingestion, staging, transformation, and reporting.3 This ensures that data defects are caught as close to the source as possible, preventing the "silent failure" of pipelines where incorrect data reaches executive dashboards undetected.1
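The idea of a quality gate at each stage can be sketched in a few lines of plain Python. This is only an illustration of the pattern, not QuerySurge functionality: the stage names follow the text, while `Gate`, `GateFailure`, `run_pipeline`, and the example checks are hypothetical names introduced here.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Row = Dict[str, object]


@dataclass
class Gate:
    """A named check that must pass before data leaves a stage."""
    name: str
    check: Callable[[List[Row]], bool]


class GateFailure(Exception):
    """Raised to stop the pipeline as soon as a gate detects a defect."""


Stage = Tuple[str, Callable[[List[Row]], List[Row]], List[Gate]]


def run_pipeline(rows: List[Row], stages: List[Stage]) -> List[Row]:
    """Run each (stage_name, transform, gates) step in order.

    The pipeline halts at the first failing gate, so a defect is reported
    at the stage where it appears rather than on a downstream dashboard.
    """
    for stage_name, transform, gates in stages:
        rows = transform(rows)
        for gate in gates:
            if not gate.check(rows):
                raise GateFailure(f"{stage_name}: gate '{gate.name}' failed")
    return rows


# Hypothetical checks, for illustration only.
not_empty = Gate("not_empty", lambda rows: len(rows) > 0)
no_null_ids = Gate(
    "no_null_ids", lambda rows: all(r.get("id") is not None for r in rows)
)
non_negative = Gate(
    "non_negative_amount", lambda rows: all(r["amount"] >= 0 for r in rows)
)

stages: List[Stage] = [
    ("ingestion", lambda rows: rows, [not_empty, no_null_ids]),
    (
        "transformation",
        lambda rows: [{**r, "amount": round(r["amount"] * 1.1, 2)} for r in rows],
        [non_negative],
    ),
]

if __name__ == "__main__":
    print(run_pipeline([{"id": 1, "amount": 10.0}], stages))
    try:
        run_pipeline([{"id": None, "amount": 3.0}], stages)
    except GateFailure as exc:
        print("halted:", exc)
```

Raising an exception at the failing stage, rather than logging and continuing, is what keeps a defect from travelling silently to later stages.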

To codify these diverse influences, the DataOps community has established a set of 17 core principles that serve as the philosophical foundation for modern data engineering.17 These principles emphasize the primacy of customer satisfaction through the early and continuous delivery of valuable analytic insights, often measured in minutes to weeks rather than months or years.10

A fundamental tenet is that "Analytics is Code," meaning that the tools used to access, integrate, and visualize data generate configurations and code that must be version-controlled and treated with the same rigor as traditional software.17 This leads to the requirement for "Reproducibility," where every component of the pipeline—including data, hardware configurations, and code—is versioned to allow for consistent results across different environments.17

Furthermore, the manifesto advocates for "Disposable Environments," enabling team members to experiment in isolated, safe technical environments that mirror production without incurring high costs or risks.17 This supports the goal of "Reducing Heroism," where the reliance on individual technical brilliance is replaced by sustainable, scalable processes and automated systems.17 The ultimate objective is to achieve "Process-Thinking," viewing the beginning-to-end orchestration of data, tools, and code as the key driver of analytic success.17


Enterprise Challenges in the Data Pipeline

The importance of DataOps is underscored by the systemic failures of traditional data management in the face of modern architectural complexity. Enterprises today manage data across increasingly fragmented ecosystems, where data flows through "tortuous routes" before reaching its final destination.18

Traditional database quality assurance (QA) methods, such as manual "stare and compare" or Excel-based sampling, cannot handle the scale of modern data lakes and warehouses.19 Manual methods typically verify less than 1% of total records, leaving more than 99% of the data unvalidated and prone to hidden defects.19 In environments where a single migration might involve 10 billion records, manual comparison is not merely inefficient but practically impossible.23
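The gap between sampling and full comparison is easy to demonstrate on a small scale. The sketch below uses in-memory Python lists as stand-ins for a source and target table; the function name `compare_all` and the sample data are assumptions made for illustration, and at real volumes the comparison would be pushed into the data stores rather than held in memory.

```python
from typing import Dict, List, Tuple

Row = Dict[str, int]


def compare_all(
    source: List[Row], target: List[Row], key: str
) -> Tuple[List[int], List[int], List[int]]:
    """Compare every source row with its target row, matched on `key`.

    Returns three sorted lists of key values: rows missing from the target,
    rows present only in the target, and rows whose contents differ.
    """
    src = {row[key]: row for row in source}
    tgt = {row[key]: row for row in target}
    missing = sorted(src.keys() - tgt.keys())
    unexpected = sorted(tgt.keys() - src.keys())
    mismatched = sorted(k for k in src.keys() & tgt.keys() if src[k] != tgt[k])
    return missing, unexpected, mismatched


# 1,000 source records and a target copy with two seeded defects.
source = [{"id": i, "balance": i * 10} for i in range(1000)]
target = [dict(row) for row in source]
target[737]["balance"] = -1  # one corrupted record (id 737)
del target[12]               # one dropped record (id 12)

# A 1% sample (the first 10 ids) happens to contain neither defect.
sample_source = [row for row in source if row["id"] < 10]
sample_target = [row for row in target if row["id"] < 10]

if __name__ == "__main__":
    print("sample:", compare_all(sample_source, sample_target, "id"))
    print("full:  ", compare_all(source, target, "id"))
```

The sampled check reports no problems, while the full comparison reports the dropped record and the corrupted one.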

Modern data pipelines are highly sensitive to upstream changes. A minor schema update in a source system or a modification in an external API feed can trigger a cascade of failures downstream, resulting in broken analytics dashboards or inaccurate machine learning predictions.1 Without automated observability and quality checks, these discrepancies may remain hidden until they impact critical business operations.1 DataOps addresses this by promoting "Data Contracts" and layered architectures that provide a clear separation of concerns and well-defined interfaces for data integration.6
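A data contract can be as simple as an agreed list of columns and types that every incoming batch is checked against. The following is a minimal sketch under that assumption; `CONTRACT`, `contract_violations`, and the column names are hypothetical, and real contracts usually also cover nullability, value ranges, and delivery guarantees.

```python
from typing import Dict, List

# Hypothetical contract: the columns and Python types the consumer expects.
CONTRACT: Dict[str, type] = {"order_id": int, "customer_id": int, "total": float}


def contract_violations(rows: List[dict], contract: Dict[str, type]) -> List[str]:
    """Return a human-readable list of contract violations for a batch."""
    violations: List[str] = []
    for i, row in enumerate(rows):
        # Columns the contract requires but the row lacks.
        for col in sorted(contract.keys() - row.keys()):
            violations.append(f"row {i}: missing column '{col}'")
        # Columns the producer added or renamed without agreement.
        for col in sorted(row.keys() - contract.keys()):
            violations.append(f"row {i}: unexpected column '{col}'")
        # Columns present on both sides but with the wrong type.
        for col, expected in contract.items():
            if col in row and not isinstance(row[col], expected):
                actual = type(row[col]).__name__
                violations.append(
                    f"row {i}: '{col}' is {actual}, expected {expected.__name__}"
                )
    return violations


if __name__ == "__main__":
    ok_batch = [{"order_id": 1, "customer_id": 7, "total": 19.99}]
    # Upstream change: a column was renamed and an id became a string.
    changed_batch = [{"order_id": "1", "cust_id": 7, "total": 19.99}]
    print(contract_violations(ok_batch, CONTRACT))
    for line in contract_violations(changed_batch, CONTRACT):
        print(line)
```

Running the check at ingestion turns an upstream schema change into an immediate, explicit failure instead of a broken dashboard later.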

The business impact of poor data validation is measurable and severe. Gartner estimates that the average organization loses USD 14.2 million annually due to poor data quality, with 40% of all business initiatives failing to achieve their targeted benefits because of underlying data issues.14 Beyond direct financial loss, inaccurate data leads to misinformed strategic decisions, operational delays, failed audits, and the erosion of trust in analytics platforms.14 For C-level executives making "big bets" on the future of their firms, the lack of validated data is akin to navigating with an inaccurate map; the destination will inevitably be incorrect.14

In response to these challenges, QuerySurge has established itself as the leading enterprise-grade platform for automated data validation and ETL testing.26 It provides a comprehensive solution that spans the entire data ecosystem—from ingestion and transformation to reporting and AI integration.18

QuerySurge’s market dominance is rooted in its unparalleled connectivity, offering over 200 pre-built data connectors.28 This allows the platform to serve as a high-precision "forensic tool" for ETL specialists, providing a bridge to nearly any legacy database, NoSQL store, or cloud-native environment.28

| Data Store Category | Supported Technologies |
|---|---|
| Big Data Platforms | Hadoop (HDFS), Apache HBase, Cassandra, MongoDB, DynamoDB.22 |
| Cloud Data Warehouses | Snowflake, Databricks, Amazon Redshift, Google BigQuery, Azure Synapse.22 |
| Relational Databases | Oracle, MS SQL Server, IBM DB2, MySQL, PostgreSQL, Netezza.28 |
| Enterprise Applications | SAP Systems, Salesforce, Microsoft Dynamics, Oracle ERP.29 |
| File Systems & Formats | S3, Azure Data Lake, Flat Files (CSV), JSON, XML, Excel, Parquet.22 |
| Web Services & APIs | SOAP, RESTful, GraphQL.28 |

This breadth of connectivity ensures that QuerySurge can validate the "entire pipeline," testing each stage of the data journey—ingestion, staging, transformation, and machine learning preparation—to ensure accuracy before data moves forward.18

QuerySurge is distinguished by its "DevOps for Data" module, which features a robust RESTful API with over 60 API calls and full Swagger documentation.28 This architecture allows technical teams to embed data testing directly into their existing delivery pipelines.29 Tests can be triggered immediately following an ETL job or code commit, with results automatically piped back to alerting tools, QA management systems (like Jira), and communication platforms (like Slack or Teams).28

By treating data validation as an automated component of the CI/CD cycle, QuerySurge helps organizations shift data quality "left," catching defects earlier in the development lifecycle when they are less expensive to remediate.18 This integration supports a "ship and iterate" culture, allowing data teams to deploy changes quickly with the confidence that any regressions will be instantly detected.8
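The trigger-and-report flow can be pictured as a small gate step inside a CI job. The sketch below deliberately does not call any real QuerySurge endpoint: `trigger`, `fetch_result`, and `notify` are placeholder callables, and the result shape (`passed` and `failed` counts) is an assumption. In practice they would wrap the calls described in the vendor's Swagger documentation and a chat or ticketing webhook.

```python
from typing import Callable, Dict, List


def validation_gate(
    suite: str,
    trigger: Callable[[str], str],
    fetch_result: Callable[[str], Dict[str, int]],
    notify: Callable[[str], None],
) -> int:
    """Run a test suite and return a process exit code for the CI job.

    All three callables are hypothetical stand-ins for real API and
    webhook calls; only the control flow is the point of this sketch.
    """
    run_id = trigger(suite)
    result = fetch_result(run_id)
    passed, failed = result["passed"], result["failed"]
    status = "PASSED" if failed == 0 else "FAILED"
    notify(f"[{status}] {suite} run {run_id}: {passed} passed, {failed} failed")
    # A non-zero exit code fails the CI stage and blocks the deployment.
    return 0 if failed == 0 else 1


if __name__ == "__main__":
    # In-memory stand-ins so the sketch runs without any network access.
    messages: List[str] = []
    exit_code = validation_gate(
        suite="nightly-etl",
        trigger=lambda suite: "run-001",
        fetch_result=lambda run_id: {"passed": 40, "failed": 2},
        notify=messages.append,
    )
    print(exit_code, messages)
```

Passing the three operations in as functions keeps the gate logic testable without a live server and makes it easy to swap the notification target.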

The most significant advancement in the QuerySurge platform is the introduction of QuerySurge AI, a generative AI engine designed to accelerate smarter data validation and eliminate the manual effort associated with test creation.28


Mapping Intelligence: Converting Design to Execution

A primary bottleneck in ETL testing is the manual coding of validation scripts from mapping documents. On average, a single data mapping requires one hour of manual labor to code into two queries (one at source, one at target).33 QuerySurge AI’s "Mapping Intelligence" module extracts mappings directly from Excel documents and automatically generates the corresponding SQL tests in the native language of the data store.33 This technology allows for the creation of 200 tests per hour, providing a massive reduction in the time needed for test development.23
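To make the "two queries per mapping" idea concrete, the sketch below turns one mapping row into a source query and a target query and compares their results. It is a simple string template, not the generative approach the product describes; the `Mapping` fields, table names, and sample data are all hypothetical, and an in-memory SQLite database stands in for both data stores.

```python
import sqlite3
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Mapping:
    """One row of a (hypothetical) source-to-target mapping document."""
    source_table: str
    source_expr: str    # column or SQL expression applied at the source
    target_table: str
    target_column: str
    key: str            # column used to align source and target rows


def query_pair(m: Mapping) -> Tuple[str, str]:
    """Build the source and target queries for one mapping.

    Identifiers are interpolated directly, so the mapping document must
    come from a trusted author.
    """
    source_sql = (
        f"SELECT {m.key}, {m.source_expr} AS value "
        f"FROM {m.source_table} ORDER BY {m.key}"
    )
    target_sql = (
        f"SELECT {m.key}, {m.target_column} AS value "
        f"FROM {m.target_table} ORDER BY {m.key}"
    )
    return source_sql, target_sql


def run_pair(conn: sqlite3.Connection, m: Mapping) -> List[tuple]:
    """Return (source_row, target_row) pairs that disagree.

    Assumes both sides return the same keys; a fuller comparison would
    also report rows missing on either side.
    """
    source_sql, target_sql = query_pair(m)
    source_rows = conn.execute(source_sql).fetchall()
    target_rows = conn.execute(target_sql).fetchall()
    return [(s, t) for s, t in zip(source_rows, target_rows) if s != t]


def demo() -> List[tuple]:
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE src_customers (id INTEGER, first_name TEXT, last_name TEXT);
        CREATE TABLE dim_customer (id INTEGER, full_name TEXT);
        INSERT INTO src_customers VALUES (1, 'Ada', 'Lovelace'), (2, 'Alan', 'Turing');
        INSERT INTO dim_customer VALUES (1, 'Ada Lovelace'), (2, 'Alan Turin');
        """
    )
    mapping = Mapping(
        source_table="src_customers",
        source_expr="first_name || ' ' || last_name",
        target_table="dim_customer",
        target_column="full_name",
        key="id",
    )
    return run_pair(conn, mapping)


if __name__ == "__main__":
    print(demo())
```

The demo seeds one truncated name in the target table, so the pair reports a single mismatch for id 2.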

Query Intelligence and Low-Code Democratization

"Query Intelligence" complements this by enabling less-technical users—such as business analysts or subject matter experts—to contribute to the validation process.33 Through a natural language interface, users can generate complex SQL queries and transformation logic without extensive programming knowledge.28 This democratization of data testing ensures that those with the most intimate understanding of the business rules can verify the accuracy of the data pipelines.15

QuerySurge AI offers two implementation paths to accommodate the diverse security and performance requirements of global enterprises.32

| Implementation Feature | QuerySurge AI Cloud | QuerySurge AI Core |
|---|---|---|
| Deployment Type | Externally hosted, cloud-based LLM.32 | On-premises, deployed within local infrastructure.32 |
| Infrastructure | Minimal setup, no local hardware required.32 | Requires local server with GPU or CPU execution.32 |
| Data Security | Data processed externally in secure environment.32 | 100% of data remains within organization’s network.32 |
| Performance (100 Mappings) | ~5 minutes 42 seconds.32 | ~6 minutes 28 seconds (on GPU).32 |
| Control & Policy | Managed by QuerySurge for speed.32 | Full organizational control over security policies.26 |

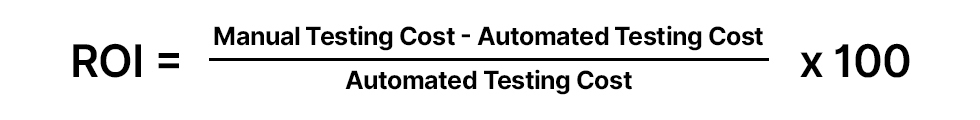

The transition from manual to automated data testing is supported by a compelling financial model. For a typical enterprise project, modeled over a three-year period at a blended resource rate of USD 95 per hour, the calculated ROI is 877%.23

This ROI is driven primarily by the drastic reduction in labor costs for test design and analysis.23 The mathematical justification for this return is derived from the following comparative analysis:

In this model, the savings are not merely theoretical; they represent a fundamental reallocation of engineering talent toward higher-value activities, such as machine learning model refinement and strategic data architecture design.15
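The arithmetic behind this kind of model can be sketched with the figures the text does supply: the USD 95 blended rate, one hour of manual coding per mapping, and 200 generated tests per hour. The project size below is a hypothetical input, and the full inputs behind the 877% figure are not given here, so the sketch covers only test-creation labor and does not attempt to reproduce that number.

```python
BLENDED_RATE_USD = 95.0           # per hour, from the text
MANUAL_HOURS_PER_MAPPING = 1.0    # from the text
AUTOMATED_TESTS_PER_HOUR = 200.0  # from the text


def creation_cost(mappings: int, hours_per_mapping: float) -> float:
    """Labor cost in USD of turning `mappings` mappings into tests."""
    return mappings * hours_per_mapping * BLENDED_RATE_USD


def roi_percent(total_benefit: float, total_cost: float) -> float:
    """Standard ROI: net gain divided by cost, expressed as a percentage."""
    return (total_benefit - total_cost) * 100.0 / total_cost


if __name__ == "__main__":
    mappings = 1_000  # hypothetical project size, not a figure from the text
    manual = creation_cost(mappings, MANUAL_HOURS_PER_MAPPING)
    automated = creation_cost(mappings, 1.0 / AUTOMATED_TESTS_PER_HOUR)
    print(f"manual test creation:    USD {manual:,.0f}")
    print(f"automated test creation: USD {automated:,.0f}")
    print(f"labor saved:             USD {manual - automated:,.0f}")
```

A complete ROI calculation would pass total three-year benefits and total costs (licensing, infrastructure, training) to `roi_percent`; those totals are not stated in the text.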

The efficacy of QuerySurge is validated through its application in high-stakes, large-scale enterprise environments.

Atos, a global leader in digital transformation, utilized QuerySurge to validate a massive database migration for a leading telecommunications company.23 The project involved migrating over 10 billion records from an Oracle environment to an enterprise-level full-stack application within a tight three-month timeline.23

| Project Scope | Validation Metric |
|---|---|
| Total Record Count | 10 Billion+.24 |
| Largest Table Tested | 4.8 Billion Records.24 |
| Total Test Suites | 421 QueryPairs.23 |
| Actual Records Validated | 9.2 Billion (92% coverage).24 |
| Average Table Size | 351 Million Records.24 |

The Atos team categorized tables by priority and criticality, establishing connections to both source and target systems through QuerySurge.24 Automated validations quickly identified discrepancies, which were resolved by the development team and re-verified through immediate re-runs of the QueryPairs.23 The project provided the client’s stakeholders with the confidence to proceed with a "big bang" migration, a success that would have been impossible with traditional sampling methods.24

Beyond telecommunications, QuerySurge is utilized by the world’s leading firms across diverse sectors to ensure data integrity.23

QuerySurge occupies a specialized position in the DataOps market, distinguishing itself through "Deep Forensic Precision" rather than broad observability.29 While "Data Observability" tools prioritize a "single pane of glass" view for monitoring freshness and volume metrics, QuerySurge is designed for high-complexity tasks like validating Slowly Changing Dimensions (SCD) and maintaining rigorous Data Lineage Tracking.21
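As an illustration of why Slowly Changing Dimensions need more than freshness and volume metrics, the sketch below checks two common invariants of a Type 2 dimension: each business key has exactly one current row, and its version date ranges neither overlap nor leave gaps. The column names (`business_key`, `valid_from`, `valid_to`) and the convention that `valid_to` is exclusive and `None` for the current row are assumptions, not a description of how QuerySurge performs the check.

```python
from collections import defaultdict
from datetime import date
from typing import Dict, List


def scd2_violations(rows: List[dict]) -> List[str]:
    """Check Type 2 invariants per business key and describe any violations.

    Assumes `valid_to` is exclusive, so a closed version must end on the
    same date the next version starts, and is None for the current row.
    """
    by_key: Dict[str, List[dict]] = defaultdict(list)
    for row in rows:
        by_key[row["business_key"]].append(row)

    problems: List[str] = []
    for key in sorted(by_key):
        versions = sorted(by_key[key], key=lambda r: r["valid_from"])
        current = [v for v in versions if v["valid_to"] is None]
        if len(current) != 1:
            problems.append(f"{key}: expected 1 current row, found {len(current)}")
        for prev, nxt in zip(versions, versions[1:]):
            if prev["valid_to"] is None or prev["valid_to"] > nxt["valid_from"]:
                problems.append(
                    f"{key}: version starting {prev['valid_from']} overlaps the next"
                )
            elif prev["valid_to"] < nxt["valid_from"]:
                problems.append(
                    f"{key}: gap before version starting {nxt['valid_from']}"
                )
    return problems


if __name__ == "__main__":
    dimension = [
        {"business_key": "C1", "valid_from": date(2023, 1, 1), "valid_to": date(2023, 6, 1)},
        {"business_key": "C1", "valid_from": date(2023, 6, 1), "valid_to": None},
        # C2's first version ends after its second version has already started.
        {"business_key": "C2", "valid_from": date(2023, 1, 1), "valid_to": date(2023, 9, 1)},
        {"business_key": "C2", "valid_from": date(2023, 3, 1), "valid_to": None},
    ]
    for problem in scd2_violations(dimension):
        print(problem)
```

Row counts and load timestamps for this table would look healthy, yet any query joining on the overlapping period for C2 would return two versions of the same customer.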


QuerySurge versus Tricentis and iceDQ

In the broader market, QuerySurge is often evaluated against Tricentis Data Integrity and iceDQ.29 Tricentis offers a scriptless, no-code approach suitable for functional testing, but QuerySurge’s specialized Query Wizards and AI-powered SQL generation provide a level of depth in ETL validation that is often required for massive, complex migrations.29 iceDQ is recognized for its data reliability and monitoring, yet QuerySurge’s unique BI Tester module—which directly validates report accuracy in platforms like Power BI and Tableau—remains a critical differentiator for organizations focused on end-user data trust.31

QuerySurge versus Qyrus Data Testing

A comparison with Qyrus highlights the specialized nature of QuerySurge’s toolset. While Qyrus focuses on the application layer and API validation (REST/SOAP/GraphQL), QuerySurge dominates the deep ETL layer with its 200+ data store connections.29 QuerySurge is preferred by technical teams who manage their data integrity as "code," utilizing its 60+ API calls for surgical control within the CI/CD pipeline.29


As the data landscape continues to evolve, several emerging trends will redefine the role of DataOps and the evolution of platforms like QuerySurge.


Multi-Cloud and Hybrid Interoperability

By 2026, multi-cloud strategies will become the standard for enterprises, with workloads distributed across AWS, GCP, and Azure to optimize cost and performance.35 DataOps must support seamless data portability and real-time governance across these diverse environments.5 QuerySurge’s cloud-ready architecture, supporting over 200 sources across all major cloud providers, ensures its continued relevance in these hybrid ecosystems.26

AI-Driven Autonomous Pipelines

The next phase of DataOps involves the rise of "intelligent" pipelines. AI will move beyond test generation to autonomous anomaly detection, root cause analysis, and self-healing systems.5 Machine learning will optimize ETL job scheduling based on real-time workload patterns, ensuring resource efficiency and predictive failure prevention.5 QuerySurge’s ongoing investment in generative AI modules like Mapping and Query Intelligence positions it at the forefront of this shift toward autonomous data quality.33

Convergence of Frameworks: DataOps, DevOps, and MLOps

The boundaries between these once-distinct frameworks are showing signs of convergence.5 Organizations are moving toward centralized business workflows where data pipelines, application development, and machine learning models are integrated into a single operational framework.5 DataOps serves as the critical enabler of "AI-ready data," ensuring that the massive datasets required for generative AI training are accurate, unbiased, and compliant with evolving regulations like GDPR and HIPAA.5

The industrialization of data management through DataOps is no longer an optional innovation but a strategic imperative for survival in a data-centric economy. Enterprises can no longer afford the financial and operational risks of "bad data" or the inefficiencies of manual testing. By adopting the principles of Agile, Lean, and DevOps, and leveraging the market-leading capabilities of QuerySurge, organizations can transform their data pipelines from a source of fragility into a reliable strategic asset.

QuerySurge’s ability to provide 100% data coverage, integrate seamlessly into CI/CD pipelines, and harness the power of artificial intelligence to automate complex testing tasks allows enterprises to innovate with confidence. The platform’s proven ROI and track record in validating billions of records demonstrate that it is uniquely equipped to handle the scale and complexity of the modern data factory. As the world moves toward an era of autonomous, AI-driven analytics, the partnership between DataOps methodologies and high-precision tools like QuerySurge will remain the foundation of enterprise digital excellence.1